Cross-layer transcoders in a battery foundation model

Background

Sparse autoencoders (SAEs) and sparse transcoders help us understand the fundamental variables of computation by decomposing activations into discrete features.

LBLM (“Large Battery Language Model”) is a time-series foundation model for battery data. It is a transformer-based, autoregressive, causal decoder-only model that is pre-trained to predict the voltage at the next time-step given the current and the amount of time elapsed since the previous time-step. It is closely analogous to modern LLM architectures, with two differences: the input at every time-step has on the order of 10 dimensions, rather than e.g. 50k dimensions for a model with 50k unique tokens in its vocabulary, and the final output is continuous (regression) rather than discrete (classification).
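To make the pre-training objective concrete, here is a minimal sketch of how next-step training pairs could be built from a raw cycling record. The three-column input layout and the function name `make_next_step_pairs` are assumptions for illustration only; LBLM's actual inputs have on the order of 10 dimensions and are not specified here.

```python
import numpy as np

def make_next_step_pairs(voltage, current, time):
    """Build (inputs, targets) for next-step voltage prediction.

    Assumed layout (hypothetical): the input at step t holds the voltage at t,
    plus the current and elapsed time that lead to step t + 1.
    """
    voltage = np.asarray(voltage, dtype=float)
    current = np.asarray(current, dtype=float)
    time = np.asarray(time, dtype=float)
    dt = np.diff(time)  # time elapsed since the previous time-step
    inputs = np.stack([voltage[:-1], current[1:], dt], axis=-1)
    targets = voltage[1:]  # continuous regression target, not a token id
    return inputs, targets

inputs, targets = make_next_step_pairs(
    voltage=[3.0, 3.1, 3.3, 3.6],
    current=[0.0, 1.0, 1.0, 2.0],
    time=[0.0, 10.0, 20.0, 25.0],
)
```

Each row of `inputs` plays the role that a token embedding plays in an LLM, and `targets` replaces the next-token label with a real-valued voltage.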

Transcoder implementation and training

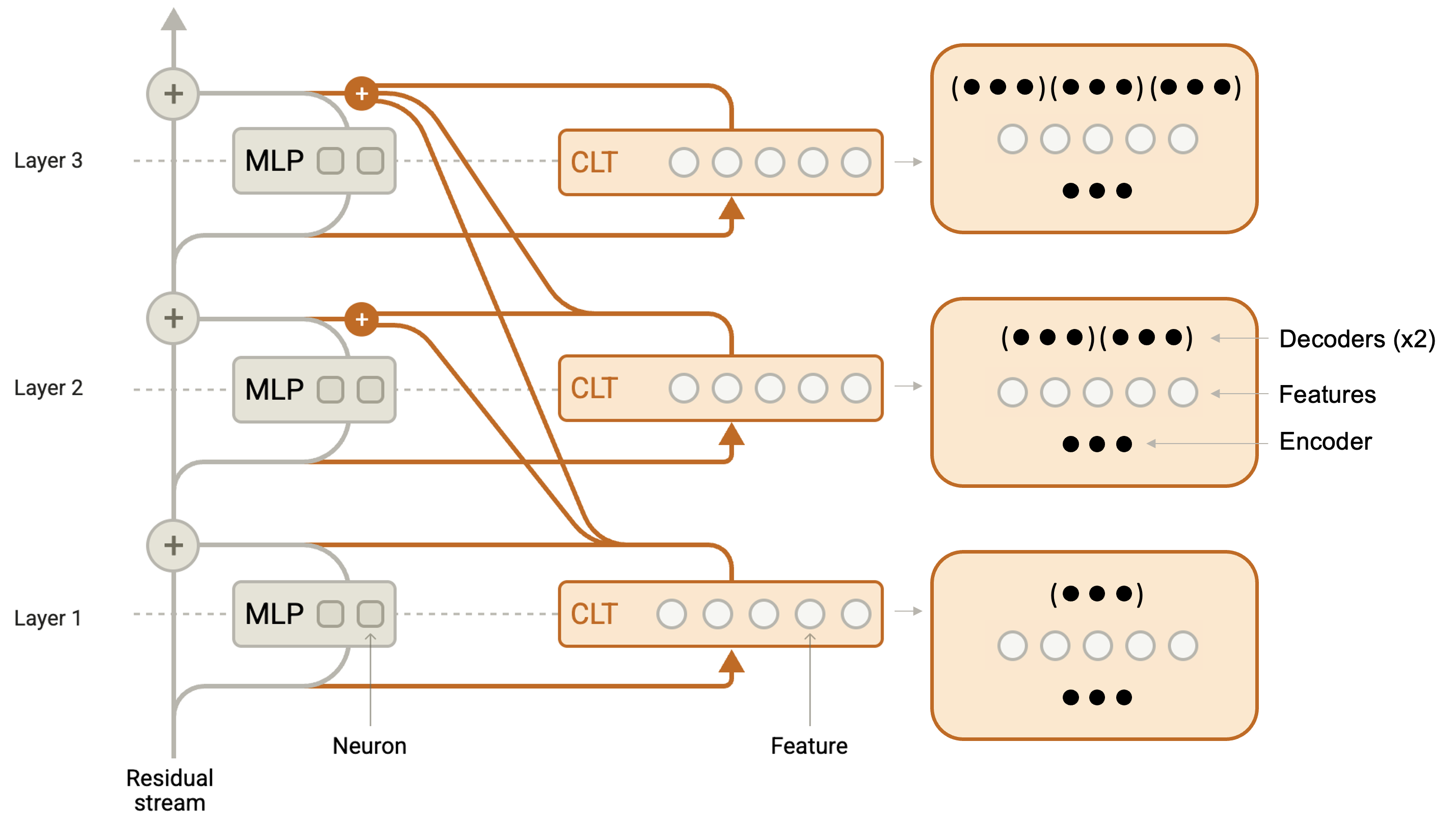

I trained a sparse cross-layer transcoder to learn interpretable features inside LBLM using the loss function $L = \textrm{MSE} + \lambda L_1$, where $\textrm{MSE}$ is the mean squared error of the reconstructed activations, $\lambda$ is the sparsity coefficient hyperparameter, and $L_1$ is the one-norm of the feature tensor $F$ taken across the layer, sequence, and feature dimensions [1]. An encoder-ReLU-decoder setup was used to reconstruct the effect of the MLPs. There was a single encoder for each layer, and thus a single set of features at each layer. There were $l$ decoders at layer $l$. The figure above is an expanded version of the figure from the Transformer Circuits thread, which clearly shows the number of encoders, features, and decoders per layer [2].
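The following NumPy sketch illustrates the forward pass and loss described in this paragraph. It is not the training code used for LBLM: the sizes are toy values, biases are omitted, and all names (`W_enc`, `W_dec`, `clt_forward`, `clt_loss`) are hypothetical. It also assumes that "$l$ decoders at layer $l$" means the MLP output at layer $l$ is reconstructed from the features of layers $1$ through $l$.

```python
import numpy as np

rng = np.random.default_rng(0)
n_layers, seq_len, d_model, n_feat = 3, 5, 4, 8  # toy sizes, not LBLM's

# One encoder per layer (biases omitted for brevity).
W_enc = rng.normal(size=(n_layers, d_model, n_feat)) * 0.1
# W_dec[(src, dst)] maps features of layer `src` to the MLP output of layer `dst >= src`.
W_dec = {(src, dst): rng.normal(size=(n_feat, d_model)) * 0.1
         for dst in range(n_layers) for src in range(dst + 1)}

def clt_forward(mlp_in):
    """mlp_in: (n_layers, seq_len, d_model). Returns (features, reconstruction)."""
    # Encoder followed by ReLU gives one set of features per layer.
    feats = np.maximum(np.einsum("lsd,ldf->lsf", mlp_in, W_enc), 0.0)
    recon = np.zeros_like(mlp_in)
    for (src, dst), W in W_dec.items():
        recon[dst] += feats[src] @ W  # earlier layers write to later layers
    return feats, recon

def clt_loss(mlp_in, mlp_out, lam):
    """L = MSE + lam * L1, with L1 summed over layer, sequence, and feature dims."""
    feats, recon = clt_forward(mlp_in)
    mse = np.mean((recon - mlp_out) ** 2)
    l1 = np.abs(feats).sum()
    return mse + lam * l1, mse, l1

x = rng.normal(size=(n_layers, seq_len, d_model))  # stand-in MLP inputs
y = rng.normal(size=(n_layers, seq_len, d_model))  # stand-in MLP outputs
loss, mse, l1 = clt_loss(x, y, lam=1e-3)
```

With this indexing, the last layer receives contributions from `n_layers` decoders and the first layer from one, for `n_layers * (n_layers + 1) / 2` decoder matrices in total.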

I opted to implement my own transcoders, in part because of the lack of a cross-layer transcoder implementation in TransformerLens and SAELens, in part because I wanted the experience of implementing it myself, and in part because I wanted transcoder training to be maximally compatible with the existing LBLM training codebase.

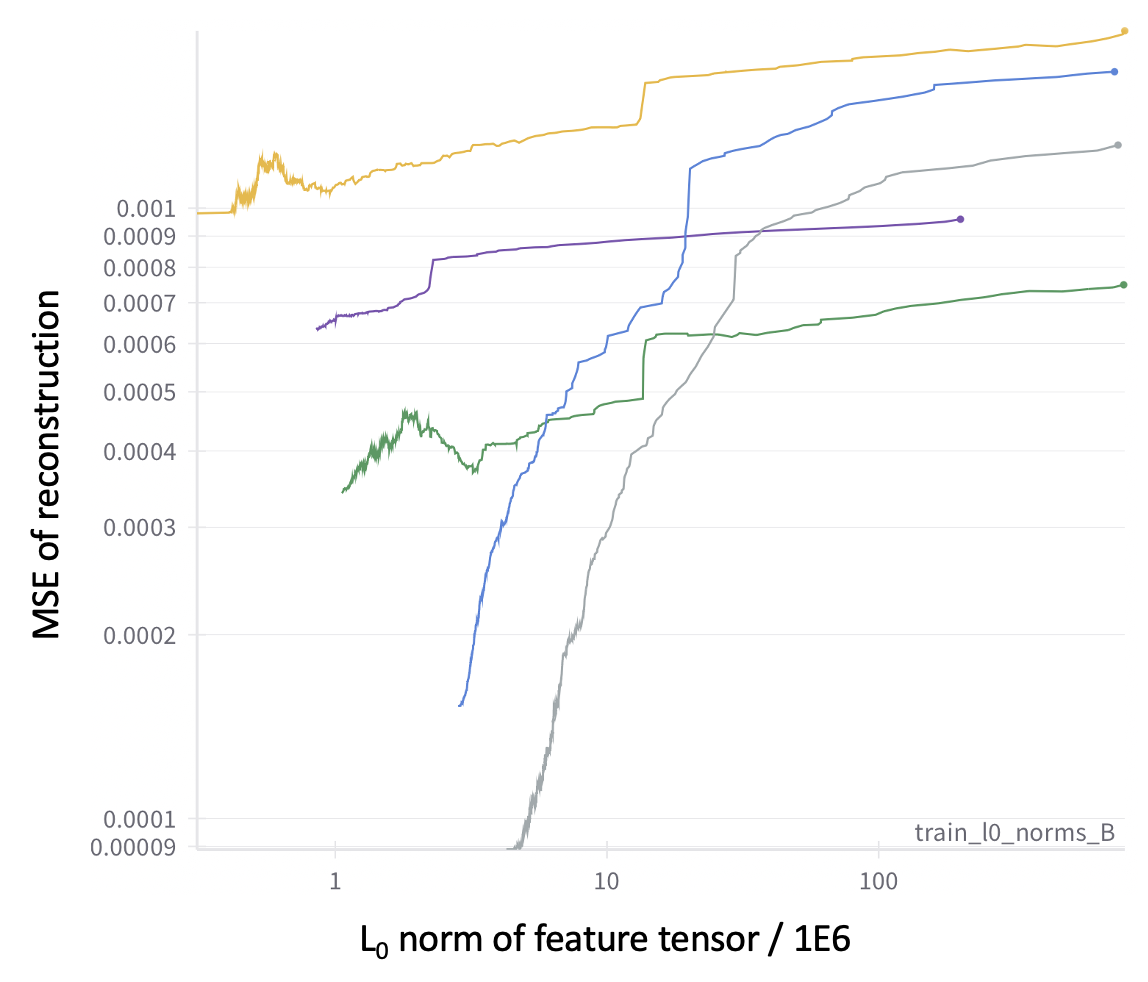

The Pareto frontier of performance in the (MSE of reconstruction, L0 of feature tensor) plane is shown in the figure above. All runs start in the upper-right and proceed to the bottom-left during training. There is a substantial tradeoff between reconstruction accuracy and sparsity.
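For readers who want to extract such a frontier from their own runs, here is a minimal sketch. The function name `pareto_frontier` and the `runs` values are made up for illustration and are not results from these experiments; lower is treated as better on both axes.

```python
def pareto_frontier(points):
    """Return the (mse, l0) points not dominated by any other point.

    A point is dominated if another point is at least as good on both
    axes; lower MSE and lower L0 are both treated as better.
    """
    frontier = []
    best_l0 = float("inf")
    for mse, l0 in sorted(points):  # ascending MSE, ties broken by L0
        if l0 < best_l0:  # strictly sparser than everything with lower MSE
            frontier.append((mse, l0))
            best_l0 = l0
    return frontier

# Hypothetical final (MSE, L0) values for five runs.
runs = [(0.10, 400), (0.20, 150), (0.25, 200), (0.40, 90), (0.15, 500)]
frontier = pareto_frontier(runs)
```

Choosing an operating point then amounts to picking one entry of `frontier` according to how many active features are acceptable.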

I trained more than 40 cross-layer transcoders in total. The main exploration was the effect of the following hyperparameters:

- Number of features per layer and sequence position (from 1024 to 8192; larger would have been beneficial but I wanted to keep the model fitting on a single H100).

- Sparsity coefficient $\lambda$, both its value and ramping schedule

- Learning rate

Transcoders were trained on roughly 500 million tokens.
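As an illustration of what a ramping schedule for the sparsity coefficient $\lambda$ can look like, here is a minimal sketch of a linear warm-up. The linear shape, the function name `sparsity_coefficient`, and the example values are assumptions; the schedules actually explored are not specified here.

```python
def sparsity_coefficient(step, final_lambda, ramp_steps):
    """Linearly ramp lambda from 0 to final_lambda over ramp_steps, then hold."""
    if ramp_steps <= 0:
        return final_lambda  # no ramp: use the final value from the start
    return final_lambda * min(step / ramp_steps, 1.0)

# Example: ramp to 1e-3 over the first 100 optimizer steps.
schedule = [sparsity_coefficient(s, 1e-3, 100) for s in (0, 50, 100, 200)]
```

Starting with a small $\lambda$ lets the transcoder first learn to reconstruct before the sparsity penalty begins to prune features.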

Feature extraction and interpretation

For feature analysis and identification, I used the transcoders trained in the green trace above. My criterion was minimal reconstruction error at about 100 active features per sequence position (i.e. about 0.8192 on the x-axis of the figure); the purple transcoder would have been a reasonable choice as well.

I began by identifying which features were most active on a prompt of interest. I ran the transcoder on the prompt and extracted the 100 features with the largest activations as (layer, feature) tuples.
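A minimal sketch of this extraction step is given below. It assumes the feature activations for one prompt are available as an array of shape (layers, sequence positions, features) and ranks each feature by its peak activation over the sequence; both the ranking rule and the name `top_features` are assumptions for illustration.

```python
import numpy as np

def top_features(feats, k=100):
    """feats: (n_layers, seq_len, n_feat) activations for a single prompt.

    Returns up to k (layer, feature) tuples, largest peak activation first.
    """
    peak = feats.max(axis=1)  # (n_layers, n_feat): max over sequence positions
    k = min(k, peak.size)
    flat = np.argsort(peak, axis=None)[::-1][:k]  # flattened indices, descending
    layers, features = np.unravel_index(flat, peak.shape)
    return [(int(l), int(f)) for l, f in zip(layers, features)]

# Toy example with two clearly active features.
feats = np.zeros((2, 3, 4))
feats[1, 2, 3] = 5.0
feats[0, 0, 1] = 3.0
top2 = top_features(feats, k=2)
```

Ranking by mean activation or by activation at a specific sequence position would be equally plausible choices and only changes the reduction in the first line of the function.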

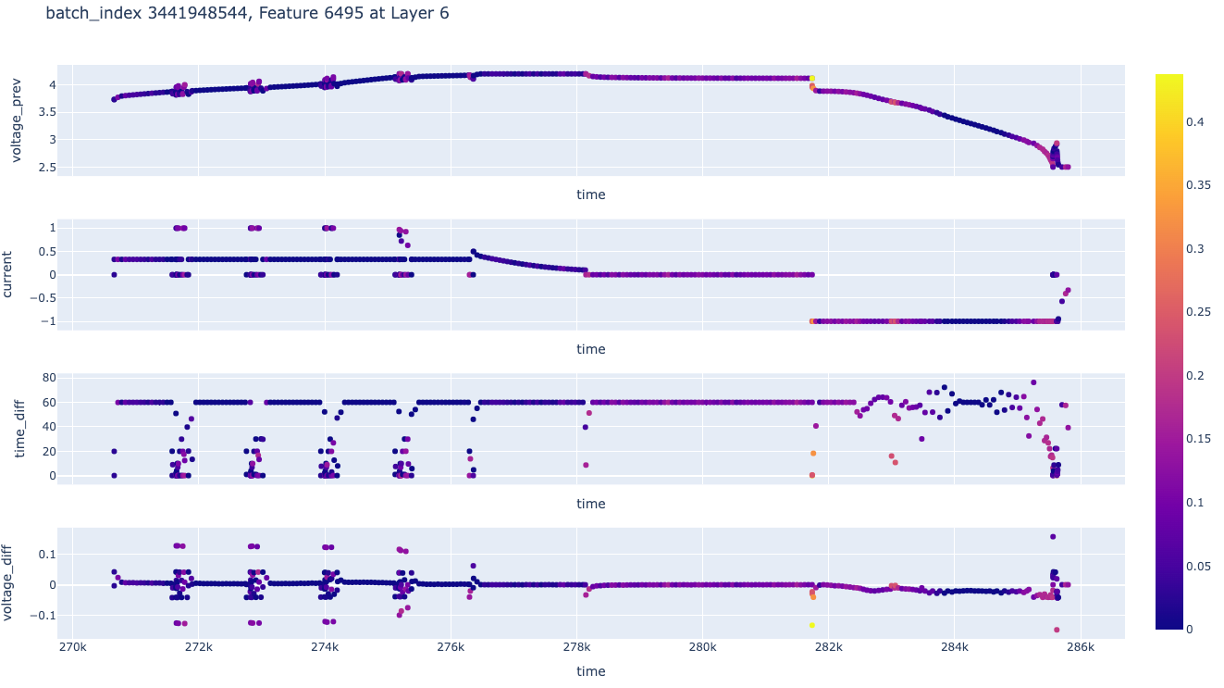

I then ran the transcoder on the training set and recorded the sequences that most strongly activated each of these features. I visually examined those sequences to try to assign an interpretation to each feature. An example sequence is shown in the figure above, where the color at each sequence point is the activation of the feature at that point, and four key sequence dimensions are plotted versus time.

There are three immediate bottlenecks with feature interpretation for this type of time-series model:

- Battery time-series data has at least three important dimensions (voltage, current, time) which must be visualized simultaneously. Additionally, meaningful patterns only emerge over long portions of sequences (the plot above shows 400 sequence points, and the total sequence length is 8192). These two facts make visualizations quite large, as in the plot above for a single sequence.

- Battery time-series are less human-interpretable than text, even for a battery domain expert. While I was able to identify various features which seemed to be associated with e.g. certain states-of-charge and discharge protocols, manually inspecting dozens of sequences to understand what they have in common is very time-consuming and tedious.

- An automated feature interpretation algorithm does not exist. There is no immediate time-series analogue of asking an LLM to summarize what is in common among a set of sequences which strongly activate a given feature.

Given these bottlenecks, I decided not to proceed further with this project.

Notes / References

1. Concretely, $F$ is a tensor with shape (24, 8192, $n_F$), where the number of features $n_F$ per layer per sequence position ranges from 1024 to 8192.

2. The setup was largely similar to https://transformer-circuits.pub/2025/attribution-graphs/methods.html, with the main differences being the use of ReLU instead of JumpReLU and the use of $L_1$ as the sparsity penalty instead of the $\tanh$-based penalty.
