A foundation model for battery data

The following is a technical whitepaper on an autoregressive transformer-based timeseries foundation model for battery data I built at Chemix. It was a customer-facing document, and as such is written for an audience with no ML background (and is a little more “sales-y”).

Attribution: I came up with the idea, wrote much of the original code, and pitched it to gather interest from potential customers. After the original MVP it was scaled and matured by my team at Chemix.

Introduction

Despite years of research, batteries still behave like “black box” systems, with very little observability or predictability. Chemix has developed an industry-first, proprietary Large Battery Language Model (LBLM) to illuminate the battery black box once and for all.

LBLM is a self-supervised transformer-based generative foundation model for battery data. LBLM enables battery engineers, fleet managers, and energy storage system operators to extract key insights into battery operation, health, safety, and residual life through virtual “experiments” which do not disrupt battery operation or require expensive teardowns or physical battery testing channels.

Any battery cell can be simulated under any hypothetical operating condition at any point in its life. This predictive capability unlocks a step-change in value for the entire battery value chain.

Battery Language

All battery operation, and all battery tests - whether to evaluate performance, safety, health, or lifetime - are fundamentally the same. A time-varying load is applied to the battery which draws a certain amount of current from the battery as a function of time. As the current is applied, the battery either charges or discharges (by convention, we consider discharge to be negative current, and charge to be positive current) and its internal state changes. This change in internal state typically results in a corresponding change in voltage, temperature, and other internal parameters. Thus the “language” of battery operation and testing is that of a time-series: current, voltage, temperature, etc. as a function of time.
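To make the idea of battery "language" concrete, here is a minimal sketch of how a single timestep might be represented, using the sign convention described above. The class name, field names, and rest threshold are illustrative assumptions, not the actual LBLM data schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One timestep of battery data (field names are illustrative)."""
    time_s: float         # elapsed time in seconds
    current_a: float      # amps; positive = charge, negative = discharge
    voltage_v: float      # terminal voltage in volts
    temperature_c: float  # cell temperature in degrees Celsius


def mode(sample: Sample, rest_threshold_a: float = 1e-3) -> str:
    """Classify a timestep using the sign convention from the text."""
    if sample.current_a > rest_threshold_a:
        return "charge"
    if sample.current_a < -rest_threshold_a:
        return "discharge"
    return "rest"


# A tiny made-up trace: rest, then discharge, then charge.
trace = [
    Sample(0.0, 0.0, 3.70, 25.0),
    Sample(1.0, -2.0, 3.65, 25.1),
    Sample(2.0, 1.5, 3.72, 25.1),
]
modes = [mode(s) for s in trace]
```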

The response of a battery to a given current profile depends on the state of the battery at the moment the profile is applied. This state is determined by the battery design (chemistry, form factor, electrode materials, electrolyte formulation, etc.) and the historical operation conditions for the battery (as batteries degrade as they are used).

Typically battery tests are conducted by connecting the battery to a physical battery tester and applying a varying current profile. Such physical battery tests can take hours, months, or even years, depending on the test.

Capabilities

LBLM eliminates physical battery testing by allowing tests to be simulated virtually. Just as a prompt containing relevant information can be passed to an LLM application such as ChatGPT or Claude to generate a text or image response, battery “language” data (voltage, current, temperature, etc. time-series) can be passed to LBLM to generate a battery language response. The response generated by LBLM simulates the result of conducting a test on that battery.

prompt = historical battery data + current profile → predicted output
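The prompt structure can be sketched as an autoregressive rollout: each predicted voltage is appended to the context before the next step is predicted. This is a minimal illustration only. The `rollout` function and the `toy_model` stand-in are hypothetical and do not reflect the actual LBLM interface or model.

```python
from typing import Callable, List, Tuple

Step = Tuple[float, float]  # (current_a, voltage_v) for one timestep


def rollout(
    predict_next_voltage: Callable[[List[Step], float], float],
    history: List[Step],
    current_profile: List[float],
) -> List[float]:
    """Autoregressively simulate the voltage response to a current profile."""
    context = list(history)  # copy so the caller's history is not mutated
    predicted = []
    for current_a in current_profile:
        voltage_v = predict_next_voltage(context, current_a)
        predicted.append(voltage_v)
        context.append((current_a, voltage_v))  # feed the prediction back in
    return predicted


def toy_model(context: List[Step], next_current_a: float) -> float:
    # Stand-in for a trained model: last voltage plus a small
    # current-proportional shift. This is NOT how LBLM predicts.
    last_voltage_v = context[-1][1]
    return last_voltage_v + 0.01 * next_current_a


history = [(0.0, 3.70), (-1.0, 3.69)]  # made-up historical data
profile = [-1.0, -1.0, 2.0]            # hypothetical future current profile
simulated = rollout(toy_model, history, profile)
```

Running several virtual tests from the same starting point then amounts to calling `rollout` with the same `history` and different `current_profile` lists.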

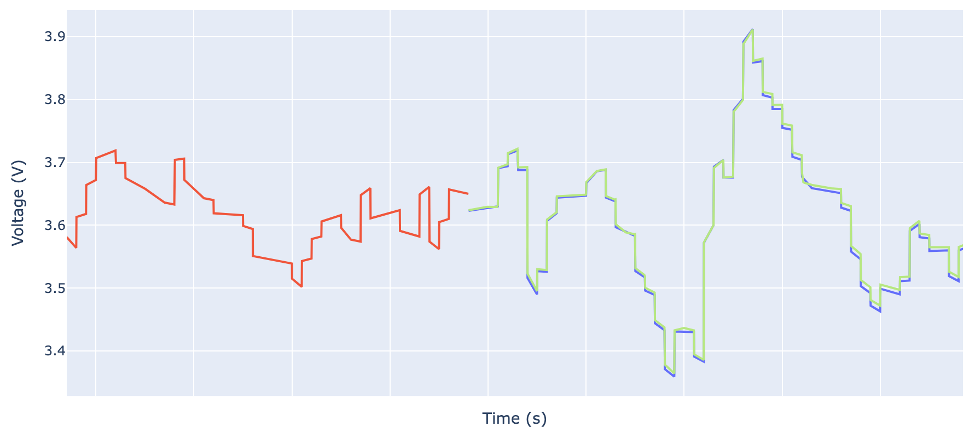

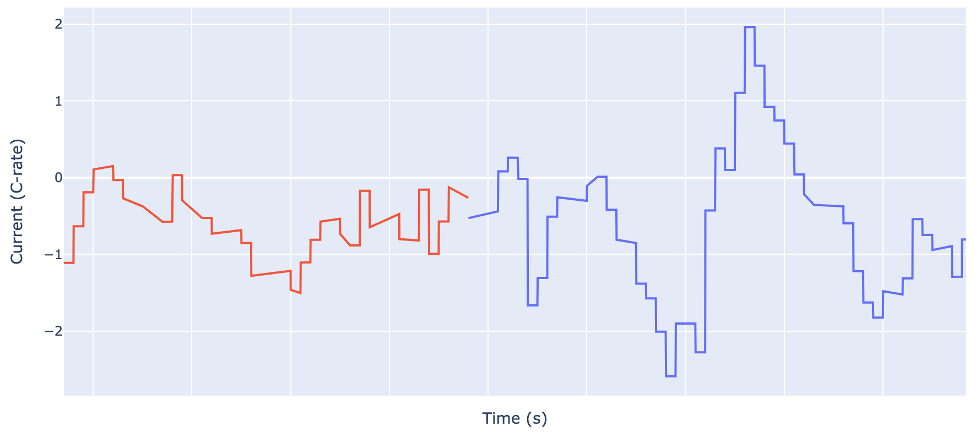

The figure below shows an example for an urban EV drive cycle. The red trace is the historical battery data which is passed to the prompt, the blue trace is the actual current profile which was applied after this point, and the green trace is the simulated profile predicted by LBLM.

The simulated profile (green) was generated by LBLM from the historical battery data (red) and the current profile corresponding to the next steps in the drive cycle (blue), shown below.

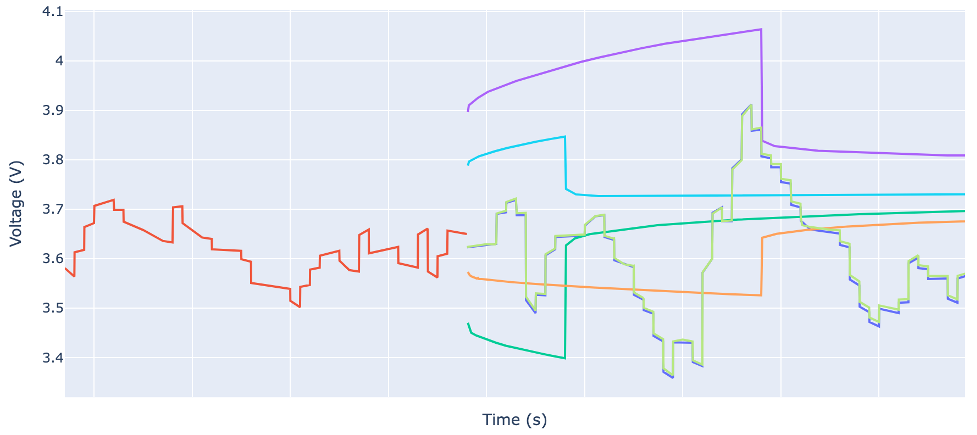

LBLM can be used to conduct a variety of tests on a battery simultaneously by providing different current profiles. For example, the figure below shows a variety of virtual hybrid-pulse-power-characterization (HPPC) tests being conducted on the same battery to calculate internal resistance, all starting from the same starting point at the end of the historical data.

Every trace other than red and blue is a simulated test result. From these virtual HPPC tests and virtual full charge and discharge tests at different C-rates, we can calculate a variety of state-of-health metrics shown in the table below.

| State-of-Health Metric | Test Parameters | Result |
|---|---|---|
| DCIR | 10s, 1C, charge | 0.093 Ohm * Ah |
| DCIR | 30s, 2C, charge | 0.128 Ohm * Ah |
| DCIR | 30s, 1C, discharge | 0.185 Ohm * Ah |
| DCIR | 10s, 2C, discharge | 0.162 Ohm * Ah |
| Capacity Retention | Constant current, 0.5C | 0.65 (normalized to nominal) |
| Capacity Retention | Constant current, 1C | 0.51 (normalized to nominal) |
| Capacity Retention | Constant current, 2C | 0.25 (normalized to nominal) |

The results of these virtual experiments indicate that the battery at this particular point in its lifetime is substantially degraded, with only 65% capacity retention at low rate.
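As a rough illustration of how metrics like these can be derived from a simulated trace, the sketch below computes a pulse resistance and a capacity retention. The definitions used here (voltage change divided by current change, resistance multiplied by nominal capacity to give Ohm * Ah, and trapezoidal integration of current) are common conventions assumed for this example and are not necessarily the exact calculations behind the table. All numbers are made up.

```python
def dcir_ohm(v_before, v_after, i_before, i_pulse):
    """Direct-current internal resistance from a pulse: delta V / delta I."""
    return (v_after - v_before) / (i_pulse - i_before)


def capacity_ah(times_s, currents_a):
    """Integrate |current| over time with the trapezoidal rule, in Ah."""
    total_amp_seconds = 0.0
    for k in range(1, len(times_s)):
        dt = times_s[k] - times_s[k - 1]
        avg_abs_current = 0.5 * (abs(currents_a[k]) + abs(currents_a[k - 1]))
        total_amp_seconds += avg_abs_current * dt
    return total_amp_seconds / 3600.0


nominal_ah = 2.0  # hypothetical nominal capacity

# Hypothetical 1C discharge pulse: voltage sags from 3.70 V to 3.60 V
# when the current steps from rest (0 A) to -2 A.
resistance = dcir_ohm(3.70, 3.60, 0.0, -2.0)  # about 0.05 Ohm
normalized = resistance * nominal_ah          # about 0.10 Ohm * Ah

# Hypothetical constant-current discharge at -1 A lasting one hour
# before the (simulated) cell reaches its lower voltage limit.
delivered_ah = capacity_ah([0.0, 1800.0, 3600.0], [-1.0, -1.0, -1.0])
retention = delivered_ah / nominal_ah         # fraction of nominal capacity
```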

Model

LBLM leverages the latest advancements in model architectures, training, and compute, such as flash self-attention for the attention heads, SwiGLU activations for the feedforward layers, and schedule-free AdamW optimization.
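For readers curious about one of these components, a SwiGLU feedforward layer multiplies a Swish-activated "gate" projection elementwise with a second linear projection, then projects the result back down. The sketch below shows the standard formulation in plain Python with made-up weights. The function names, layer sizes, and weights are illustrative and are not LBLM's.

```python
import math


def swish(z):
    """Swish (also called SiLU): z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(x, w):
    """Row vector x (length n) times matrix w (n rows, m columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x)))
            for j in range(len(w[0]))]


def swiglu_ffn(x, w_gate, w_up, w_down):
    """SwiGLU feedforward: (swish(x W_gate) * (x W_up)) W_down."""
    gate = [swish(g) for g in matvec(x, w_gate)]
    up = matvec(x, w_up)
    hidden = [g * u for g, u in zip(gate, up)]  # elementwise gating
    return matvec(hidden, w_down)


# Tiny made-up example: input width 2, hidden width 3, output width 2.
x = [1.0, -1.0]
w_gate = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
w_up = [[1.0, 1.0, 1.0], [0.0, 0.0, 0.0]]
w_down = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = swiglu_ffn(x, w_gate, w_up, w_down)
```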

In addition to a highly expressive neural network architecture and efficient training, LBLM leverages the physics-informed neural network (PINN) framework to ensure that simulated experiments are consistent with known battery physics. This powerful inductive bias improves generalization and learning efficiency. In addition, the auxiliary dynamical variables learned along with the directly-observable battery outputs produce extra information about the battery state.
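The general shape of a physics-informed training objective can be sketched as a data-fit term plus a penalty on violations of a known physical relation. The example below assumes, purely for illustration, that the auxiliary variable is state of charge (SOC) and that the physics constraint is charge conservation, d(SOC)/dt = I / (3600 · Q). The physics actually encoded in LBLM is not specified here, and all names and numbers are hypothetical.

```python
def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def physics_residuals(soc, currents_a, dt_s, capacity_ah):
    """Finite-difference residual of d(SOC)/dt = I / (3600 * Q)."""
    residuals = []
    for k in range(1, len(soc)):
        dsoc_dt = (soc[k] - soc[k - 1]) / dt_s
        residuals.append(dsoc_dt - currents_a[k] / (3600.0 * capacity_ah))
    return residuals


def pinn_loss(v_pred, v_true, soc_pred, currents_a, dt_s, capacity_ah,
              weight=1.0):
    """Data term on voltage plus a weighted physics-residual penalty."""
    data_term = mse(v_pred, v_true)
    res = physics_residuals(soc_pred, currents_a, dt_s, capacity_ah)
    physics_term = sum(r * r for r in res) / len(res)
    return data_term + weight * physics_term


# Made-up example: a 1 Ah cell charged at 1 A, sampled every 36 s,
# so SOC should rise by 0.01 per step.
voltages = [3.70, 3.71, 3.72]
currents = [1.0, 1.0, 1.0]
consistent = pinn_loss(voltages, voltages, [0.50, 0.51, 0.52],
                       currents, 36.0, 1.0)
inconsistent = pinn_loss(voltages, voltages, [0.50, 0.50, 0.50],
                         currents, 36.0, 1.0)
```

With a perfect voltage fit in both cases, only the SOC trajectory that violates charge conservation is penalized, which is the sense in which the physics term acts as an inductive bias.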

Data

At Chemix, we’ve been collecting high-quality lifetime data on more than 15,000 cells for over three years. We have more than 2000 test channels, some of which can apply up to 1000 A of current. Collectively, the cells in our proprietary dataset have completed more than 5M cycles. Below is a list of parameters summarizing our dataset.

- Form factor: pouch, prismatic, cylindrical

- Capacity: 1Ah through 175Ah

- Cathode: NMC (many different Ni:Mn:Co ratios), LFP, LFMP (many different Fe:Mn ratios)

- Anode: Graphite, SiOx, SiC

- Cell designs: hundreds of different combinations of electrode loadings, porosities, etc.

- Suppliers: dozens of different active material suppliers and cell vendors

- Cycling protocols: cycle life tests, rate tests, DCIR tests, drive cycle tests

- Data collection hardware: battery cyclers, battery management system, and Chemix proprietary sensing hardware.

This dataset comprises more than 8B timesteps (“tokens”) and is actively growing every day.

Results

Voltage prediction accuracy

LBLM is highly accurate across a wide variety of cells. We have achieved an error of less than 1 mV RMSE for next-voltage prediction across a test set of more than 2000 cells, spanning hundreds of unique combinations of the attributes in the list of parameters above.
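For reference, RMSE (root-mean-square error) is the square root of the average squared difference between predicted and measured values. The sketch below shows the calculation on a few made-up voltages. The function name and the numbers are illustrative and are not LBLM results.

```python
import math


def rmse_mv(predicted_v, measured_v):
    """Root-mean-square error between two voltage traces, in millivolts."""
    squared_errors = [(p - m) ** 2 for p, m in zip(predicted_v, measured_v)]
    return 1000.0 * math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up example: errors of +1 mV, -1 mV, and 0 mV.
measured = [3.700, 3.650, 3.600]
predicted = [3.701, 3.649, 3.600]
error_mv = rmse_mv(predicted, measured)  # sqrt(2/3) mV, roughly 0.82 mV
```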

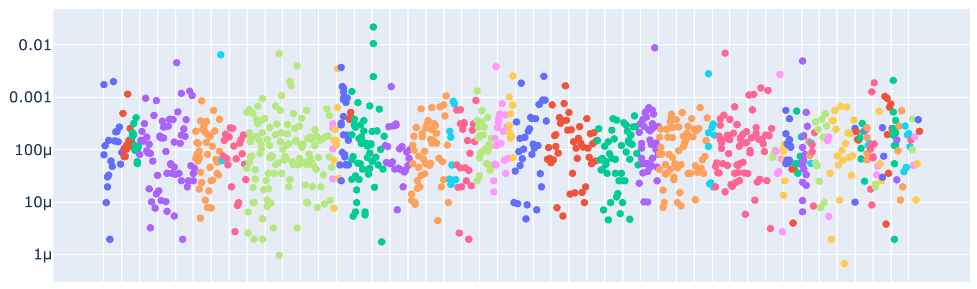

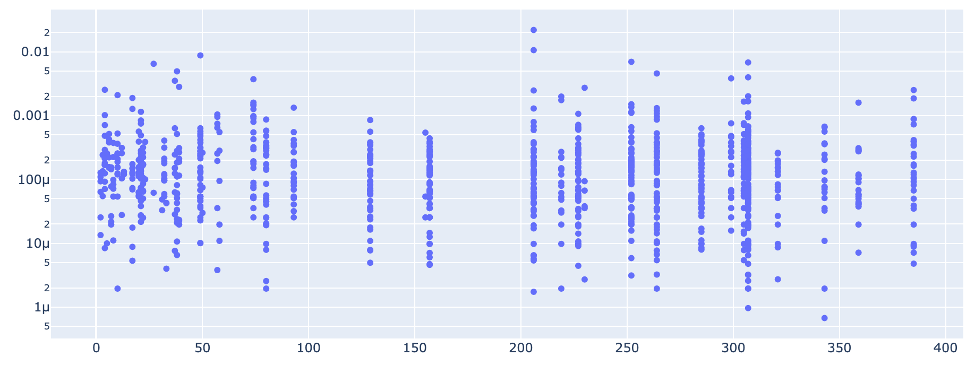

LBLM is not just memorizing the training set, but is truly able to generalize. The next-voltage prediction error rate across the test set is low regardless of how many potentially-similar cells are in the training set. The figure below shows how the error rate varies with the number of cells in the training set with the same anode, cathode, and form factor. The figure shows that the error is low even with only a handful of similar cells in the training set.

The accuracy of simulated profiles across entire charge or discharge segments can be even more instructive than next-voltage prediction accuracy. Average voltage error for these entire steps is less than 2mV across the same test set.

State-of-health prediction

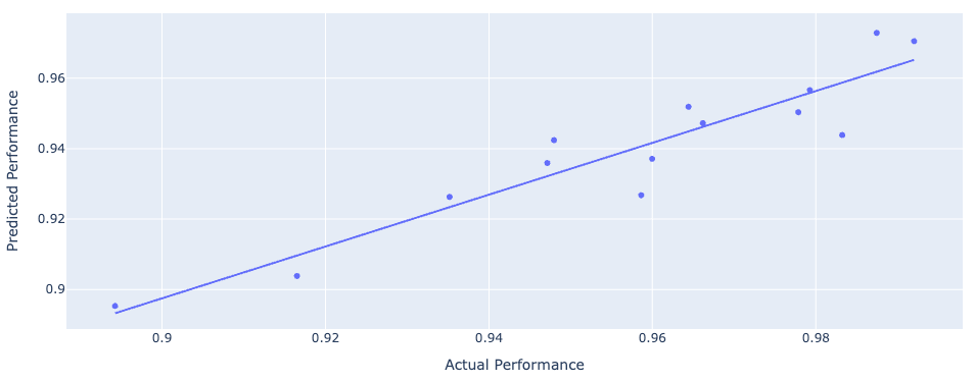

One of the most useful applications of LBLM is predicting the state-of-health of a battery from just its operating data: no lab checkup tests or reference-performance tests (RPTs) required. The figure below shows the accuracy in predicting state-of-health for a set of 14 cells performing 5 distinct drive cycles.

Summary

- LBLM is an industry-first generative foundation model capable of delivering value across a variety of use cases, from cell development to cell qualification to health and life estimation, by enabling virtual cell testing and evaluation.

- LBLM is based on the same modern generative AI architectures as LLMs such as ChatGPT and Claude, and is trained on an industry-leading corpus of proprietary data.

- LBLM achieves impressive accuracy across a wide variety of cells and use-cases.
